Modeling Social Systems: Transparency, Reproducibility, and Responsibility

By the Casa Matemática Oaxaca Workgroup on Collective Social Phenomena

Researchers frequently use mathematical modeling to enrich theory, guide interventions, and inform policy on a variety of issues, from voting behavior to economic inequality and urban development. Modeling’s expanding role in the social sciences introduces novel opportunities and places new responsibilities on the creation, communication, and interpretation of the models in question. Because these models influence decisions that impact millions of lives, developers must consider transparency, reproducibility, and humility — especially under the constraints of privacy and limited data.

Mathematical modeling in the social sciences is uniquely challenging; researchers lack controlled experiments, and observational data are often sparse, noisy, or systematically missing. Social variables are also subject to substantial measurement error, and populations are heterogeneous and often nonstationary, with behaviors and definitions that change over time. In many contexts, essential social data are simply unavailable at the local scales where questions matter most — especially for sensitive issues like crime or corruption. Modelers may be forced to rely on coarse aggregates or assumptions in place of missing empirical grounding. Given these limitations, how can we build models that support science and decision-making processes in transparent and responsible ways?

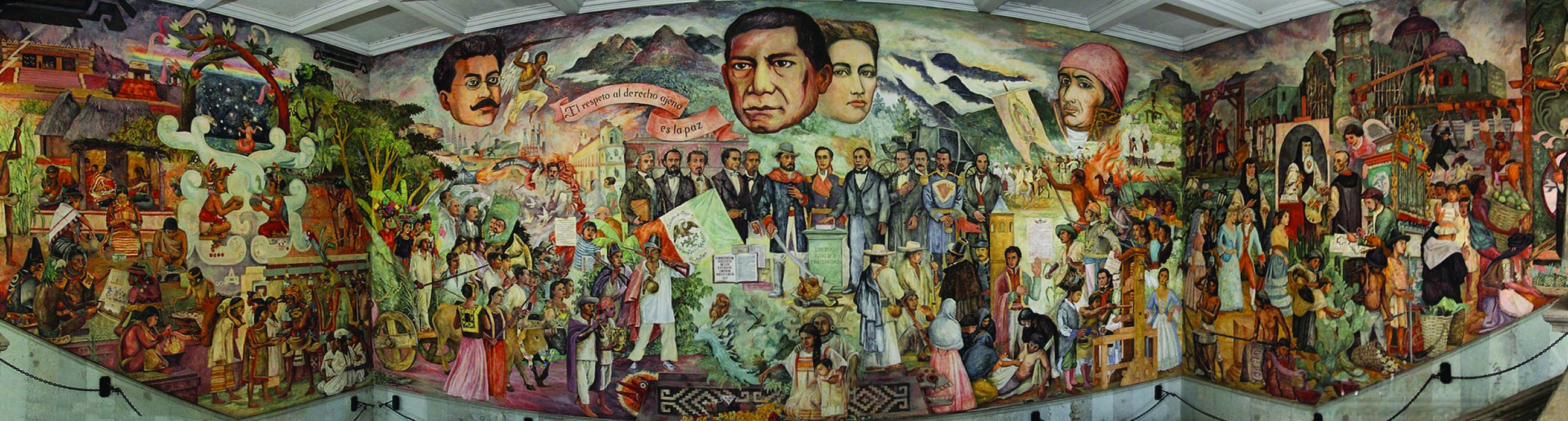

This question motivated a recent workshop titled “Collective Social Phenomena: Dynamics and Data,” which took place last June at Casa Matemática Oaxaca in Mexico (see Figure 1). During the weeklong event, mathematicians, statisticians, engineers, and computational social scientists discussed modeling frameworks, ethical challenges, and disciplinary norms. Based on these conversations, participants distilled six guiding principles for transparent and responsible modeling of social systems:

- Be explicit about modeling aims

- Clearly communicate model assumptions and researcher perspectives

- Match models to real-world stakes

- Quantify and communicate uncertainty

- Share code and data

- Collaborate across disciplines and perspectives

Here, we expand on these principles with examples from the workshop and the broader literature [1]. We hope that they will serve as useful starting points for quantitative modelers of social systems.

Why Model?

In the famed words of computational social scientist Paul Smaldino, “Models are stupid, and we need more of them” [5]. Part of Smaldino’s argument is that models may serve purposes other than the detailed reconstruction of their target systems — purposes that might warrant considerable simplifications. The appropriateness of a given simplification depends on the intended objective of the model. In the social domain, scientists use models to explore possible connections between social mechanisms, explain observed individual or collective behaviors, and predict the outcomes of social processes or interventions. These purposes are distinct from a model’s target system or application area and can address questions of social conformity, voting behavior, economic inequality, or urban development in exploratory, explanatory, or predictive forms.

Exploratory models can show, for example, how simple individual preferences generate stark, large-scale patterns. Because they explore consequences that could arise from minimal assumptions, they illustrate both the value and risks of counterfactual modeling in the social sciences. In contrast, explanatory models seek to capture the proposed mechanisms behind observed phenomena; for example, compartmental epidemic models demonstrate how simple infection and recovery processes can reproduce familiar outbreak patterns and support scenario analysis for public health interventions. Both types of models challenge intuition by making assumptions explicit and identifying the places where common narratives break down, especially in domains where outcomes emerge from network structures, feedback, or randomness that defy straightforward storytelling [6].
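To make the compartmental example concrete, the following is a minimal sketch of a susceptible-infected-recovered model, integrated with simple forward Euler steps. It is an illustration under stated assumptions, not a calibrated model: the function names, the population size, and the rate parameters `beta` (transmission) and `gamma` (recovery) are all hypothetical choices that do not come from the workshop.

```python
def simulate_sir(beta, gamma, s0, i0, r0, days, dt=0.1):
    """Integrate the SIR equations with forward Euler steps.

    dS/dt = -beta * S * I / N
    dI/dt =  beta * S * I / N - gamma * I
    dR/dt =  gamma * I

    All parameter values passed in are illustrative assumptions.
    Returns a list of (time, S, I, R) tuples.
    """
    n = s0 + i0 + r0
    s, i, r = float(s0), float(i0), float(r0)
    history = [(0.0, s, i, r)]
    steps = int(round(days / dt))
    for k in range(1, steps + 1):
        new_infections = beta * s * i / n * dt
        new_recoveries = gamma * i * dt
        s -= new_infections
        i += new_infections - new_recoveries
        r += new_recoveries
        history.append((k * dt, s, i, r))
    return history


def peak_infected(history):
    """Return the (time, S, I, R) row at which I is largest."""
    return max(history, key=lambda row: row[2])


# Scenario analysis: vary the (hypothetical) transmission rate and
# report how the size and timing of the epidemic peak respond.
for beta in (0.2, 0.3, 0.4):
    t_peak, _, i_peak, _ = peak_infected(
        simulate_sir(beta=beta, gamma=0.1, s0=999, i0=1, r0=0, days=160)
    )
    print(f"beta={beta:.1f}: peak of {i_peak:.0f} infected at day {t_peak:.0f}")
```

Even in this toy setting, the peak shifts noticeably with `beta`, which is one reason to report such sensitivity alongside any scenario that a model like this is used to support.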

Unlike exploratory and explanatory work, predictive models aim to forecast future outcomes based on past data, often without representing underlying mechanisms. Prediction in the social sciences is especially difficult because data are frequently partial or biased, behaviors shift over time, and highly flexible models can be hard to interpret or trust in policy-based contexts. Consider the 2017 “Fragile Families Challenge,” which asked 160 teams from across the world to utilize machine learning algorithms to predict six different life outcomes for children based on a common dataset [3]. The resulting models—which relied on widely different approaches—yielded surprisingly similar predictions with low levels of accuracy. Sophisticated models barely outperformed simple baselines, thus highlighting the limits of prediction even with significant modeling efforts.

Because social systems are reflexive, trouble arises when these categories are conflated. Once a model is public, people and institutions may respond to it — potentially altering the very behavior that the model seeks to describe. This reflexivity amplifies the ethical and political stakes of model use in policy settings. Exploratory models that double as explanations can be misleading. For instance, economist Thomas Schelling’s model of segregation [4] cannot serve as an account of U.S. housing patterns because it obscures the role of discriminatory policy and institutions. Strong predictive performance is sometimes taken to supersede explanatory insight, echoing claims that abundant data can replace theory [6]. Conversely, reliance on simple explanatory models for prediction can reduce accuracy in settings where predictive performance is paramount. However, all three types of models are valuable in the social sciences when matched transparently to their purpose; usefulness depends less on realism and more on clarity of aim.

Communicating Assumptions and Context

Every model that attempts to represent the world contains simplifying assumptions, whether implicit or explicit. Transparent modeling requires the clear communication of these assumptions. As such, modelers should be forthcoming about their choices and reflect on the potential influence of disciplinary and institutional contexts. For example, the features considered for inclusion in a predictive model often reflect the creators’ subjective judgments about which factors are relevant, measurable, and available.

In some cases, researchers may find it helpful to document modeling plans or assumptions in advance. While doing so is not always feasible, particularly in exploratory work, early transparency about motivations, simplifying assumptions, and conceivable alternatives can clarify a model’s purpose and scope. This practice also reduces risks of overfitting or confirmation bias and improves interpretability and accountability.

Techniques such as documenting modeling decisions, sharing code and intermediate results, and inviting external critiques can also expose implicit choices. Furthermore, adversarial collaborations, in which researchers with contrasting viewpoints jointly design and analyze a model, can clarify how differing assumptions shape overall conclusions.

Matching Models to Stakes

Models of social systems can have real consequences when they inform policy or guide interventions. Responsible modeling therefore requires careful attention to a model’s intended use and the harms that could arise if its purpose is misunderstood. Institutional data constraints (including administrative data that are collected for compliance rather than research, uneven population coverage, and systematic biases in who and what is measured) compound these risks.

As we noted earlier, scientists may misread exploratory models as explanations. Explanatory models like the susceptible-infected-recovered compartmental framework can inform public health decisions, but their conclusions depend on simplified assumptions that might overlook economic, social, or demographic realities. Predictive models, including those in the aforementioned Fragile Families Challenge, can shape interventions in people’s lives despite limited accuracy and known biases in race, gender, and other demographic attributes.

Modelers should therefore ask themselves the following questions: What are this model’s intended uses and likely misuses? Who might benefit, and who might be harmed or excluded? Could communication of the model itself cause harm? Reflection on these queries helps to align modeling practices with the social contexts and real-world stakes they seek to illuminate.

It is also important to remember that mathematical models can shape public discourse. When divorced from context, models can mislead, be co-opted for political ends, or create a false sense of certainty. During the COVID-19 pandemic, for example, models became lightning rods in public debate and were sometimes misunderstood or misrepresented by decision-makers and the general public. Stylized models of opinion dynamics or economic behavior have likewise appeared in editorials as if they were policy recommendations; some machine learning models have even claimed to predict criminality or sexuality from facial images alone, which lends visibility to unfounded and harmful ideas.

Although modelers cannot control their work’s reception, they can reduce misinterpretation by clearly stating their aims and assumptions, appropriately labeling exploratory models, and explicitly articulating limitations and sensitivity. Collaboration with domain experts supports proper interpretation, and engagement with affected communities reveals perspectives that may otherwise go unnoticed. Involving the individuals and communities that are most impacted by a model’s use can identify potential harms, clarify priorities, and foster accountability — ultimately aligning modeling efforts more closely with the lived realities they aim to represent.

Open Practices for Social Science Modeling

Mathematical modelers in the social sciences must grapple with forms of uncertainty that differ sharply from those in the physical sciences. Uncertainty in social systems is often driven not only by sampling variation, but also by measurement error, evolving population definitions, and institutional inconsistencies in data collection. Knowledge of social phenomena typically rests on partial, biased, or institutionally defined data (e.g., census categories that shift over time, administrative records that are shaped by policy rather than measurement, or surveys with nonrepresentative samples). Because these uncertainties reflect both data limitations and social realities, modelers should make their assumptions visible, identify places where evidence is thin, and work with domain experts who understand the data.
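As a small illustration of why measurement error deserves explicit attention, the sketch below simulates the textbook case of classical measurement error in a predictor. Everything here is an assumption made for illustration: the data are synthetic, and the function names, sample size, true slope, and noise levels are hypothetical. Under these assumptions, an ordinary least-squares slope estimated from the noisy predictor shrinks toward zero by roughly the factor var(x) / (var(x) + var(error)), which is 0.5 for the defaults used here.

```python
import random


def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    return cov_xy / var_x


def simulate_slopes(n=5000, true_slope=2.0, noise_sd=1.0, error_sd=1.0, seed=0):
    """Compare slopes estimated from a true and a mismeasured predictor.

    All defaults are illustrative assumptions on synthetic data.
    Returns (slope using true x, slope using noisily observed x).
    """
    rng = random.Random(seed)
    x_true = [rng.gauss(0.0, 1.0) for _ in range(n)]
    y = [true_slope * x + rng.gauss(0.0, noise_sd) for x in x_true]
    # The analyst only sees x contaminated by independent measurement error.
    x_observed = [x + rng.gauss(0.0, error_sd) for x in x_true]
    return ols_slope(x_true, y), ols_slope(x_observed, y)


clean, attenuated = simulate_slopes()
print(f"slope with true predictor:        {clean:.2f}")
print(f"slope with mismeasured predictor: {attenuated:.2f}")
```

A larger sample does not repair this bias, since it comes from how the variable is measured rather than from sampling variation. Real social data rarely satisfy the tidy assumptions of this sketch, which is part of why input from people who understand how the data were produced matters.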

Transparent practices also look different when humans and communities are the units of analysis. The sharing of code and documentation of modeling decisions remain essential, but unrestricted data release is often impossible when privacy, consent, and community ownership are at stake. In such cases, reproducibility comes from clear workflows, synthetic or heavily deidentified datasets, and multi-team modeling efforts that reveal conclusions’ dependencies on assumptions. Engaging with social scientists and affected stakeholders helps to ensure that transparency is an active part of responsible scientific practice.

Expanding Mathematical Modeling in the Social Sciences

As new forms of data and computation emerge and policy analysis becomes increasingly quantitative, mathematical modeling’s role in the social sciences will continue to grow. Because social systems adapt to measurements and models, reflexivity is becoming a central challenge that is largely absent from the physical sciences: models can shape the very behavior that they aim to describe. Populations that are affected by policy interventions are rarely stationary and often require mechanistic models that explicitly account for evolving contexts, incentives, and feedback. As these models take on more public weight, people will rightly demand to know what assumptions they make, what causes they claim to identify, and what consequences they carry. Without clear answers to these questions, mathematical models can quickly lose public trust.

Concluding Thoughts

The Modelers’ Hippocratic Oath, proposed after the 2008 financial crisis, begins with, “I will remember that I didn’t make the world, and it doesn’t satisfy my equations” [2]. Reasoning about a model is not the same as reasoning about the system it represents, but with appropriate caution, as Smaldino notes, even simple models can illuminate complex behaviors [5]. As modeling tools advance, so too must our commitment to responsible use.

The Casa Matemática Oaxaca Workgroup on Collective Social Phenomena includes Maximino Aldana (National Autonomous University of Mexico), Heather Zinn Brooks (Harvey Mudd College), Phil Chodrow (Middlebury College), Fillipe Georgiou (University of Bath), Joseph Johnson (Carleton College), Krešimir Josić (University of Houston), Zachary Kilpatrick (University of Colorado Boulder), Kath Landgren (Stanford University), Andrew Nugent (University College London), Maurizio Porfiri (New York University), Nancy Rodriguez (University of Colorado Boulder), Pablo Suárez-Serrato (National Autonomous University of Mexico), Roni Barak Ventura (New Jersey Institute of Technology), David White (Denison University), Alexander Wiedemann (Hamline University), and Sam Zhang (University of Vermont).

References

[1] Aldana, M., Ventura, R.B., Brooks, H.Z., Chodrow, P.S., Georgiou, F., Johnson, J., … Zhang, S. (2025). Modeling social systems: Transparency, reproducibility, and responsibility. Preprint, arXiv:2508.18542.

[2] Derman, E., & Wilmott, P. (2009). The financial modelers’ manifesto. Wilmott Magazine. Retrieved from https://wilmott.com/financial-modelers-manifesto.

[3] Salganik, M.J., Lundberg, I., Kindel, A.T., Ahearn, C.E., Al-Ghoneim, K., Almaatouq, A., … McLanahan, S. (2020). Measuring the predictability of life outcomes with a scientific mass collaboration. Proc. Natl. Acad. Sci., 117(15), 8398-8403.

[4] Schelling, T.C. (1971). Dynamic models of segregation. J. Math. Sociol., 1(2), 143-186.

[5] Smaldino, P.E. (2017). Models are stupid, and we need more of them. In R.R. Vallacher, S.J. Read, & A. Nowak (Eds.), Computational social psychology. New York, NY: Routledge.

[6] Watts, D.J. (2014). Common sense and sociological explanations. Am. J. Sociol., 120(2), 313-351.
